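Integrating Kafka with Spring Boot

Kafka Installation and Configuration

Installation

Kafka installation guide

Configuration

Kafka configuration notes

ZooKeeper & Kafka Configuration and Startup

ZooKeeper Configuration

This setup uses the ZooKeeper instance bundled with Kafka, with its default configuration file (config/zookeeper.properties).

Starting ZooKeeper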

    # Start in the foreground
    bin/zookeeper-server-start.sh config/zookeeper.properties

    # Start in the background (nohup keeps the process alive after logout; the trailing & detaches it)
    nohup bin/zookeeper-server-start.sh config/zookeeper.properties &

Kafka Configuration

The following settings were changed in config/server.properties:

    ############################# Server Basics #############################
    # The id of the broker. This must be set to a unique integer for each broker.
    broker.id=0

    ############################# Socket Server Settings #############################
    # The address the socket server listens on. It will get the value returned from
    # java.net.InetAddress.getCanonicalHostName() if not configured.
    #   FORMAT:
    #     listeners = listener_name://host_name:port
    #   EXAMPLE:
    #     listeners = PLAINTEXT://your.host.name:9092
    #listeners=PLAINTEXT://:9092
    ### Changed: bind the listener to the host's IP so that machines outside the Kafka host can connect
    listeners=PLAINTEXT://192.168.239.147:9092

    # Hostname and port the broker will advertise to producers and consumers. If not set,
    # it uses the value for "listeners" if configured. Otherwise, it will use the value
    # returned from java.net.InetAddress.getCanonicalHostName().
    #advertised.listeners=PLAINTEXT://your.host.name:9092
    ### Changed: the address the broker advertises to producers and consumers
    advertised.listeners=PLAINTEXT://192.168.239.147:9092
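Before starting the Spring Boot applications, it can help to confirm that the address configured in `listeners` and `advertised.listeners` is reachable from the client machine. The following is a minimal sketch using only the JDK. `ListenerCheck`, `parse`, and `reachable` are hypothetical helper names (not part of Kafka), and the 2-second timeout is an arbitrary choice.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/** Hypothetical helper (not part of Kafka): parses a listener string and probes its port. */
final class ListenerCheck {

    /** Holds the three parts of a listener such as PLAINTEXT://192.168.239.147:9092. */
    static final class Listener {
        final String name;
        final String host;
        final int port;

        Listener(String name, String host, int port) {
            this.name = name;
            this.host = host;
            this.port = port;
        }
    }

    /** Splits "listener_name://host_name:port" into its parts. */
    static Listener parse(String listener) {
        int sep = listener.indexOf("://");
        int colon = listener.lastIndexOf(':');
        // When no port is given, the last ':' is the one that belongs to "://"
        if (sep < 0 || colon == sep) {
            throw new IllegalArgumentException(
                    "Expected listener_name://host_name:port but got: " + listener);
        }
        String name = listener.substring(0, sep);
        String host = listener.substring(sep + 3, colon);
        int port = Integer.parseInt(listener.substring(colon + 1));
        return new Listener(name, host, port);
    }

    /** Returns true if a TCP connection to host:port succeeds within timeoutMs. */
    static boolean reachable(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        String value = args.length > 0 ? args[0] : "PLAINTEXT://192.168.239.147:9092";
        Listener l = parse(value);
        System.out.println(l.name + " listener at " + l.host + ":" + l.port
                + " reachable: " + reachable(l.host, l.port, 2000));
    }
}
```

A successful TCP connection only shows that something is listening on the port; it does not prove that the broker is healthy.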

Starting Kafka

    # Start in the foreground
    bin/kafka-server-start.sh config/server.properties

    # Start in the background
    bin/kafka-server-start.sh -daemon config/server.properties

Spring Boot Project Setup

Producer

pom.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>top.simba1949</groupId>
        <artifactId>kafka-02-producer</artifactId>
        <version>1.0-SNAPSHOT</version>

        <dependencyManagement>
            <dependencies>
                <dependency>
                    <groupId>io.spring.platform</groupId>
                    <artifactId>platform-bom</artifactId>
                    <version>Cairo-RELEASE</version>
                    <type>pom</type>
                    <scope>import</scope>
                </dependency>
            </dependencies>
        </dependencyManagement>

        <dependencies>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter</artifactId>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-web</artifactId>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-test</artifactId>
                <scope>test</scope>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-devtools</artifactId>
                <optional>true</optional> <!-- the dependency is not passed on transitively -->
            </dependency>
            <dependency>
                <groupId>org.projectlombok</groupId>
                <artifactId>lombok</artifactId>
                <version>1.18.4</version>
                <scope>provided</scope>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-actuator</artifactId>
            </dependency>
            <dependency>
                <groupId>com.alibaba</groupId>
                <artifactId>fastjson</artifactId>
                <version>1.2.51</version>
            </dependency>
            <dependency>
                <groupId>org.springframework.kafka</groupId>
                <artifactId>spring-kafka</artifactId>
            </dependency>
        </dependencies>
        <build>
            <plugins>
                <!-- Compiler plugin -->
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-compiler-plugin</artifactId>
                    <configuration>
                        <!-- JDK version to compile with -->
                        <target>1.8</target>
                        <source>1.8</source>
                    </configuration>
                </plugin>
                <!-- Spring Boot packaging plugin, declared once; it also enables hot reloading -->
                <plugin>
                    <groupId>org.springframework.boot</groupId>
                    <artifactId>spring-boot-maven-plugin</artifactId>
                    <configuration>
                        <fork>true</fork> <!-- without this setting, devtools does not take effect -->
                    </configuration>
                </plugin>
                <!-- Resource copying plugin -->
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-resources-plugin</artifactId>
                    <configuration>
                        <encoding>UTF-8</encoding>
                    </configuration>
                </plugin>
            </plugins>
            <!-- Non-Java files (e.g. XML) under src/main/java are not copied to the build output by default,
                 so the directories to be packaged as resources are listed explicitly -->
            <resources>
                <resource>
                    <directory>src/main/java</directory>
                    <includes>
                        <include>**/*</include>
                    </includes>
                </resource>
                <resource>
                    <directory>src/main/resources</directory>
                    <includes>
                        <include>**/*</include>
                    </includes>
                </resource>
            </resources>
        </build>
    </project>

Configuration file

    server:
      port: 8091
    spring:
      kafka:
        bootstrap-servers: 192.168.239.147:9092
        producer:
          batch-size: 1
          buffer-memory: 102400
          key-serializer: org.apache.kafka.common.serialization.StringSerializer
          value-serializer: org.apache.kafka.common.serialization.StringSerializer
          retries: 3
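The `spring.kafka.producer` settings above map onto the Kafka client's own property names. As a rough illustration of that mapping, the sketch below builds the equivalent `java.util.Properties` by hand. `ProducerSettings` and `fromYaml` are hypothetical names; in the actual project Spring Boot performs this translation itself, so the class is not needed there.

```java
import java.util.Properties;

/** Hypothetical illustration: the producer YAML expressed as raw Kafka client properties. */
final class ProducerSettings {

    static Properties fromYaml() {
        Properties props = new Properties();
        // spring.kafka.bootstrap-servers
        props.setProperty("bootstrap.servers", "192.168.239.147:9092");
        // batch size in bytes; a value this small means records are effectively not batched
        props.setProperty("batch.size", "1");
        // total bytes the producer may use to buffer records waiting to be sent (100 KiB)
        props.setProperty("buffer.memory", "102400");
        // keys and values are sent as plain strings
        props.setProperty("key.serializer",
                "org.apache.kafka.common.serialization.StringSerializer");
        props.setProperty("value.serializer",
                "org.apache.kafka.common.serialization.StringSerializer");
        // number of times a failed send is retried
        props.setProperty("retries", "3");
        return props;
    }

    public static void main(String[] args) {
        Properties props = fromYaml();
        for (String key : props.stringPropertyNames()) {
            System.out.println(key + "=" + props.getProperty(key));
        }
    }
}
```

A `batch.size` of 1 byte keeps latency low for a demo, but a larger value usually gives better throughput.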

Application class

    package top.simba1949;

    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;

    /**
     * @author SIMBA1949
     * @date 2019/1/18 22:16
     */
    @SpringBootApplication
    public class Application {
        public static void main(String[] args) {
            SpringApplication.run(Application.class, args);
        }
    }

DTO

BaseDto.java

    package top.simba1949.common;

    import java.io.Serializable;

    /**
     * @author SIMBA1949
     * @date 2019/1/19 8:29
     */
    public class BaseDto implements Serializable {
        private static final long serialVersionUID = -2422619315901989324L;
    }

MessageDto.java

    package top.simba1949.common;

    import lombok.Data;
    import java.util.Date;

    /**
     * @author SIMBA1949
     * @date 2019/1/19 8:00
     */
    @Data
    public class MessageDto<T> {

        // Only has an effect if the class is made Serializable (for example by extending BaseDto);
        // the JSON strings sent in this post do not use it
        private static final long serialVersionUID = -8235914195331898424L;

        private Long id;
        private T body;
        private Date sendTime;
    }

UserDto.java

    package top.simba1949.common;

    import lombok.Data;

    /**
     * @author SIMBA1949
     * @date 2019/1/19 8:03
     */
    @Data
    public class UserDto {
        private static final long serialVersionUID = -7780254430276793521L;

        private Long id;
        private String username;
        private String password;
    }

KafkaProducerController.java

    package top.simba1949.controller;

    import com.alibaba.fastjson.JSON;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.kafka.core.KafkaTemplate;
    import org.springframework.kafka.support.SendResult;
    import org.springframework.util.concurrent.ListenableFuture;
    import org.springframework.web.bind.annotation.*;
    import top.simba1949.common.MessageDto;
    import top.simba1949.common.UserDto;

    /**
     * @author SIMBA1949
     * @date 2019/1/18 22:17
     */
    @RestController
    @RequestMapping
    public class KafkaProducerController {
        private static final Logger logger = LoggerFactory.getLogger(KafkaProducerController.class);

        // Keys and values are both strings, matching the StringSerializer settings
        @Autowired
        private KafkaTemplate<String, String> kafkaTemplate;

        // Sends the plain-text request parameter "msg" to topic04
        @GetMapping("{name}/send")
        public String send(@PathVariable("name") String name, String msg) {
            logger.info("get value: name is {} & msg is {}", name, msg);

            // send() is asynchronous; the callbacks run once the send succeeds or fails
            ListenableFuture<SendResult<String, String>> future = kafkaTemplate.send("topic04", msg);
            future.addCallback(
                    result -> logger.info("success {}", result),
                    ex -> logger.error("failure", ex));

            return "DONE";
        }

        // Serializes the request body to JSON and sends it to topic05
        @PostMapping("dto")
        public String sendDto(@RequestBody MessageDto<UserDto> msg) {
            logger.info("the POJO's value is {}", msg);
            String msgStr = JSON.toJSONString(msg);

            ListenableFuture<SendResult<String, String>> future = kafkaTemplate.send("topic05", msgStr);
            future.addCallback(
                    result -> logger.info("success {}", result),
                    ex -> logger.error("failure", ex));

            return "DONE";
        }
    }
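Once the producer is running, its two endpoints can be exercised from any HTTP client. The following is a rough sketch using only the JDK. The base URL `http://localhost:8091` assumes the producer runs locally on the port from its configuration file. `ProducerRequests`, `sendUrl`, `get`, `SAMPLE_DTO_JSON`, and the sample field values are made up for illustration, and the path variable is assumed to contain only URL-safe characters.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.UnsupportedEncodingException;
import java.net.HttpURLConnection;
import java.net.URL;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;

/** Hypothetical client-side helper for calling the producer's endpoints. */
final class ProducerRequests {

    /** Sample body for POST /dto; sendTime is given in epoch milliseconds. */
    static final String SAMPLE_DTO_JSON =
            "{\"id\":1,\"body\":{\"id\":100,\"username\":\"simba\",\"password\":\"secret\"},"
            + "\"sendTime\":1547856000000}";

    /** Builds the URL for GET {name}/send with the message as a query parameter. */
    static String sendUrl(String baseUrl, String name, String msg) {
        try {
            return baseUrl + "/" + name + "/send?msg=" + URLEncoder.encode(msg, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            throw new IllegalStateException("UTF-8 is always available", e);
        }
    }

    /** Performs a GET request and returns the response body as a string. */
    static String get(String url) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
        conn.setRequestMethod("GET");
        try (BufferedReader reader = new BufferedReader(
                new InputStreamReader(conn.getInputStream(), StandardCharsets.UTF_8))) {
            StringBuilder body = new StringBuilder();
            String line;
            while ((line = reader.readLine()) != null) {
                body.append(line);
            }
            return body.toString();
        } finally {
            conn.disconnect();
        }
    }

    public static void main(String[] args) throws IOException {
        String url = sendUrl("http://localhost:8091", "simba", "hello kafka");
        System.out.println("GET  " + url);
        System.out.println("POST http://localhost:8091/dto  body: " + SAMPLE_DTO_JSON);
        // Only contact the server when explicitly asked to
        if (args.length > 0 && args[0].equals("--call")) {
            System.out.println(get(url));
        }
    }
}
```

The POST request must carry the header `Content-Type: application/json` so that Spring can bind the body to `MessageDto<UserDto>`.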

Consumer

pom.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <parent>
            <artifactId>Springboot-Kafka</artifactId>
            <groupId>top.simba1949</groupId>
            <version>1.0-SNAPSHOT</version>
        </parent>
        <modelVersion>4.0.0</modelVersion>

        <artifactId>kafka-02-consumer</artifactId>

        <dependencyManagement>
            <dependencies>
                <dependency>
                    <groupId>io.spring.platform</groupId>
                    <artifactId>platform-bom</artifactId>
                    <version>Cairo-RELEASE</version>
                    <type>pom</type>
                    <scope>import</scope>
                </dependency>
            </dependencies>
        </dependencyManagement>

        <dependencies>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter</artifactId>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-web</artifactId>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-test</artifactId>
                <scope>test</scope>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-devtools</artifactId>
                <optional>true</optional> <!-- the dependency is not passed on transitively -->
            </dependency>
            <dependency>
                <groupId>org.projectlombok</groupId>
                <artifactId>lombok</artifactId>
                <version>1.18.4</version>
                <scope>provided</scope>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-actuator</artifactId>
            </dependency>
            <dependency>
                <groupId>com.alibaba</groupId>
                <artifactId>fastjson</artifactId>
                <version>1.2.51</version>
            </dependency>
            <dependency>
                <groupId>org.springframework.kafka</groupId>
                <artifactId>spring-kafka</artifactId>
            </dependency>
        </dependencies>
        <build>
            <plugins>
                <!-- Compiler plugin -->
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-compiler-plugin</artifactId>
                    <configuration>
                        <!-- JDK version to compile with -->
                        <target>1.8</target>
                        <source>1.8</source>
                    </configuration>
                </plugin>
                <!-- Spring Boot packaging plugin, declared once; it also enables hot reloading -->
                <plugin>
                    <groupId>org.springframework.boot</groupId>
                    <artifactId>spring-boot-maven-plugin</artifactId>
                    <configuration>
                        <fork>true</fork> <!-- without this setting, devtools does not take effect -->
                    </configuration>
                </plugin>
                <!-- Resource copying plugin -->
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-resources-plugin</artifactId>
                    <configuration>
                        <encoding>UTF-8</encoding>
                    </configuration>
                </plugin>
            </plugins>
            <!-- Non-Java files (e.g. XML) under src/main/java are not copied to the build output by default,
                 so the directories to be packaged as resources are listed explicitly -->
            <resources>
                <resource>
                    <directory>src/main/java</directory>
                    <includes>
                        <include>**/*</include>
                    </includes>
                </resource>
                <resource>
                    <directory>src/main/resources</directory>
                    <includes>
                        <include>**/*</include>
                    </includes>
                </resource>
            </resources>
        </build>
    </project>

Configuration file

    server:
      port: 8081
    spring:
      kafka:
        bootstrap-servers: 192.168.239.147:9092
        consumer:
          group-id: group01
          # A consumer needs deserializers, not serializers
          key-deserializer: org.apache.kafka.common.serialization.StringDeserializer
          value-deserializer: org.apache.kafka.common.serialization.StringDeserializer

Application class

    package top.simba1949;

    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;

    /**
     * @author SIMBA1949
     * @date 2019/1/18 22:28
     */
    @SpringBootApplication
    public class Application {
        public static void main(String[] args) {
            SpringApplication.run(Application.class, args);
        }
    }

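DTO classes

These are the same as the producer's DTO classes. Pay particular attention to keeping the serialVersionUID values identical.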
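The serialVersionUID only comes into play with Java's built-in serialization. The listings in this post exchange JSON strings, which do not use it. For the case where objects are exchanged via Java serialization, the sketch below shows where the value is checked. `SerialVersionDemo`, its nested `User` class, and `roundTrip` are hypothetical stand-ins, not the project's DTOs.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;

/** Hypothetical demo of a Java serialization round trip. */
final class SerialVersionDemo {

    /** Stand-in for a DTO that is shared by the producer and the consumer. */
    static final class User implements Serializable {
        // Written into the stream; the reading side must declare the same value
        private static final long serialVersionUID = -7780254430276793521L;

        final Long id;
        final String username;

        User(Long id, String username) {
            this.id = id;
            this.username = username;
        }
    }

    /** Serializes the user to bytes and reads it back, as a producer and consumer would. */
    static User roundTrip(User user) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
                out.writeObject(user);
            }
            try (ObjectInputStream in =
                         new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                // Throws InvalidClassException if the local class declares a different serialVersionUID
                return (User) in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException("Java serialization round trip failed", e);
        }
    }

    public static void main(String[] args) {
        User copy = roundTrip(new User(1L, "simba"));
        System.out.println(copy.id + " " + copy.username);
    }
}
```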

Listener class

    package top.simba1949.service;

    import com.alibaba.fastjson.JSON;
    import com.alibaba.fastjson.TypeReference;
    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.kafka.annotation.KafkaListener;
    import org.springframework.stereotype.Service;
    import top.simba1949.common.MessageDto;
    import top.simba1949.common.UserDto;

    import java.util.Optional;

    /**
     * @author SIMBA1949
     * @date 2019/1/18 22:29
     */
    @Service
    public class KafkaConsumerServiceImpl {
        private static final Logger logger = LoggerFactory.getLogger(KafkaConsumerServiceImpl.class);

        // Receives the plain-text messages sent to topic04
        @KafkaListener(topics = {"topic04"})
        public void print(ConsumerRecord<String, String> record) {
            Optional.ofNullable(record.value()).ifPresent(msg -> {
                logger.info("record {}", record);
                logger.info("msg {}", msg);
            });
        }

        // Receives the JSON messages sent to topic05 and converts them back into DTOs
        @KafkaListener(topics = {"topic05"})
        public void getDto(ConsumerRecord<String, String> record) {
            Optional.ofNullable(record.value()).ifPresent(msgStr -> {
                // The TypeReference keeps the generic type, so body is parsed as a UserDto
                MessageDto<UserDto> realMsg =
                        JSON.parseObject(msgStr, new TypeReference<MessageDto<UserDto>>() {});
                logger.info("record {}", record);
                logger.info("msg {}", realMsg);
            });
        }
    }

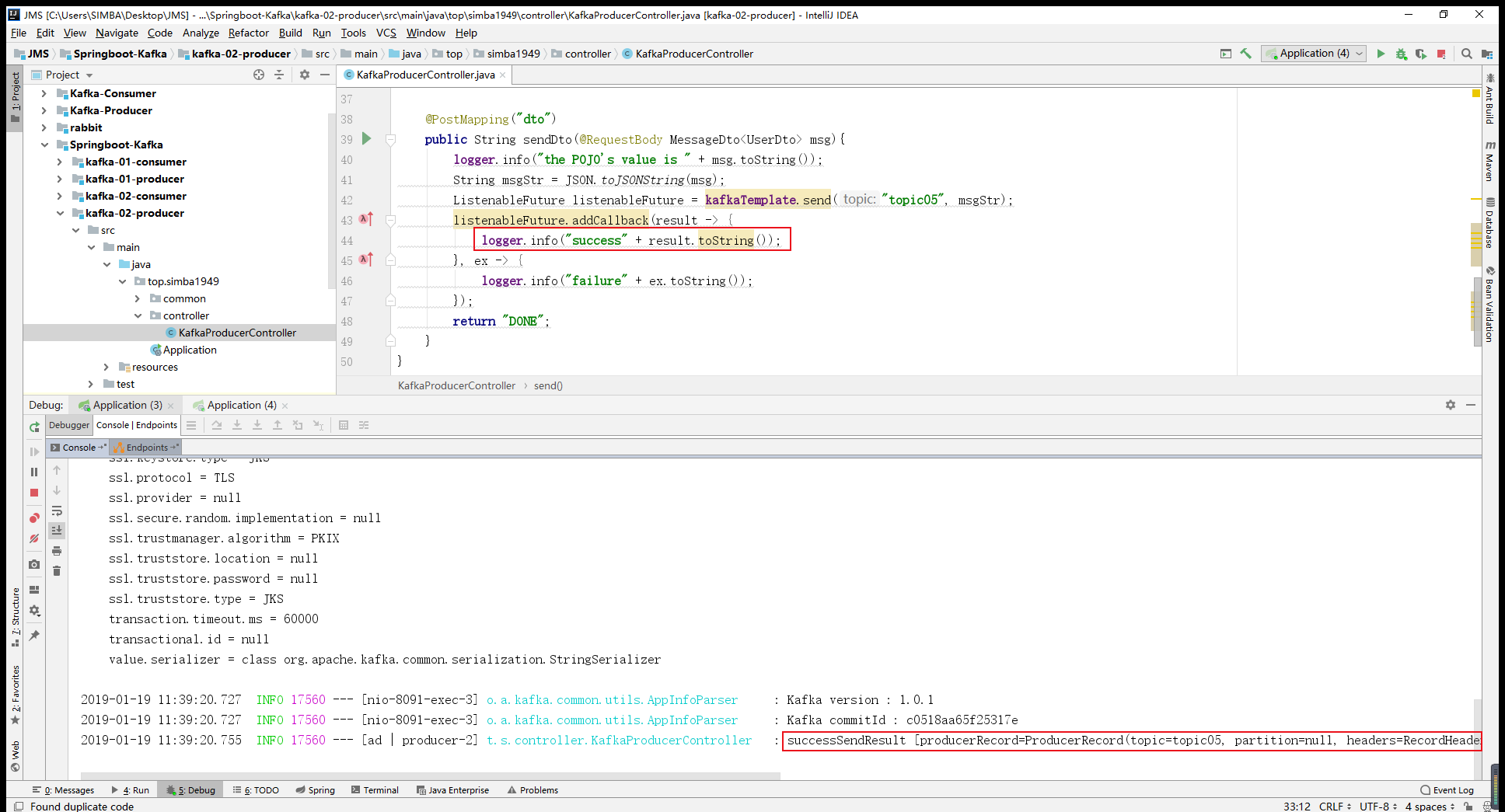

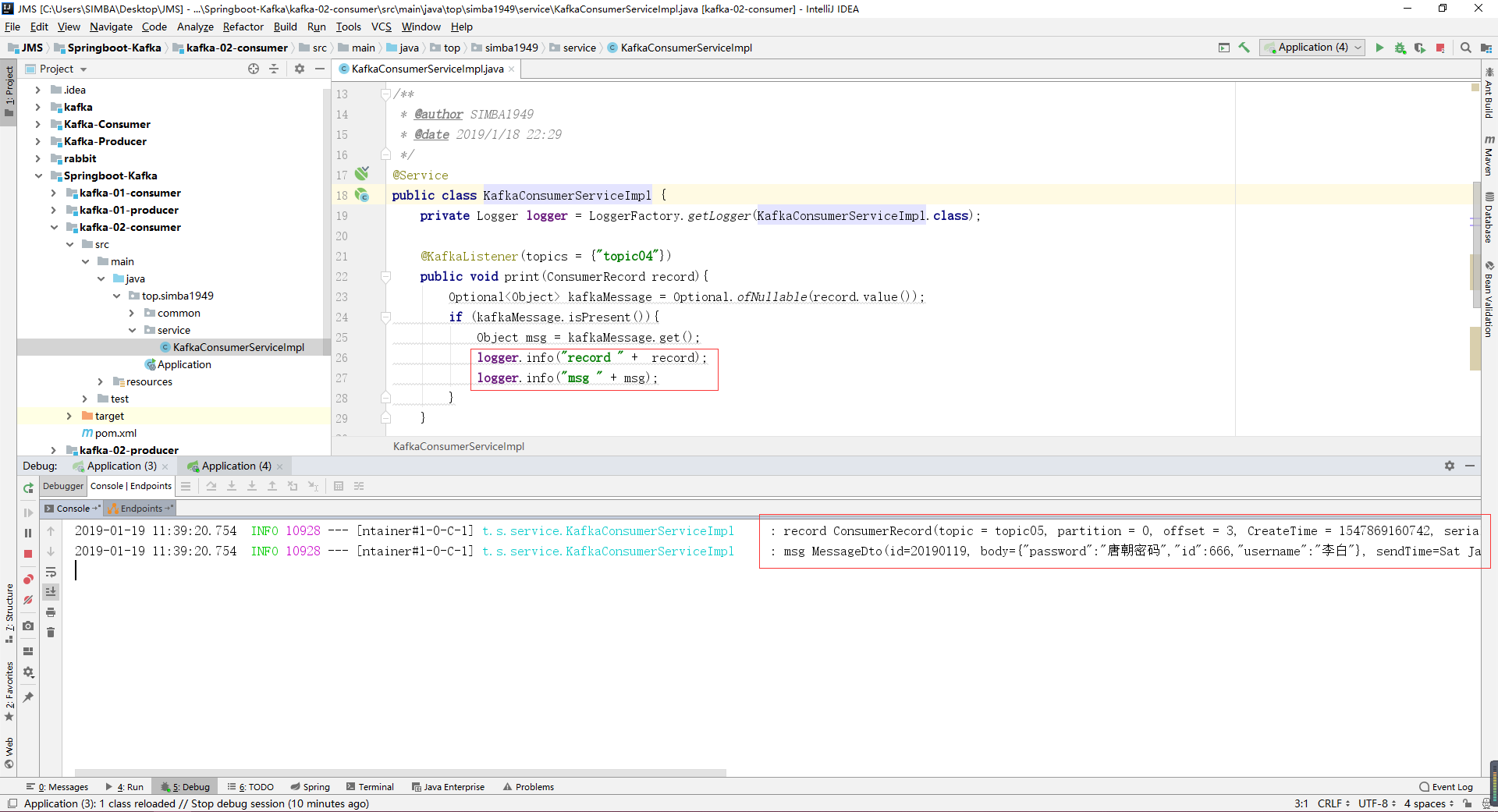

测试如下

生产者

消费者

Appendix

Original Kafka configuration file

    # Licensed to the Apache Software Foundation (ASF) under one or more
    # contributor license agreements. See the NOTICE file distributed with
    # this work for additional information regarding copyright ownership.
    # The ASF licenses this file to You under the Apache License, Version 2.0
    # (the "License"); you may not use this file except in compliance with
    # the License. You may obtain a copy of the License at
    #
    #    http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.

    # see kafka.server.KafkaConfig for additional details and defaults

    ############################# Server Basics #############################

    # The id of the broker. This must be set to a unique integer for each broker.
    broker.id=0

    ############################# Socket Server Settings #############################

    # The address the socket server listens on. It will get the value returned from
    # java.net.InetAddress.getCanonicalHostName() if not configured.
    #   FORMAT:
    #     listeners = listener_name://host_name:port
    #   EXAMPLE:
    #     listeners = PLAINTEXT://your.host.name:9092
    #listeners=PLAINTEXT://:9092

    # Hostname and port the broker will advertise to producers and consumers. If not set,
    # it uses the value for "listeners" if configured. Otherwise, it will use the value
    # returned from java.net.InetAddress.getCanonicalHostName().
    #advertised.listeners=PLAINTEXT://your.host.name:9092

    # Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details
    #listener.security.protocol.map=PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL

    # The number of threads that the server uses for receiving requests from the network and sending responses to the network
    num.network.threads=3

    # The number of threads that the server uses for processing requests, which may include disk I/O
    num.io.threads=8

    # The send buffer (SO_SNDBUF) used by the socket server
    socket.send.buffer.bytes=102400

    # The receive buffer (SO_RCVBUF) used by the socket server
    socket.receive.buffer.bytes=102400

    # The maximum size of a request that the socket server will accept (protection against OOM)
    socket.request.max.bytes=104857600


    ############################# Log Basics #############################

    # A comma separated list of directories under which to store log files
    log.dirs=/tmp/kafka-logs

    # The default number of log partitions per topic. More partitions allow greater
    # parallelism for consumption, but this will also result in more files across
    # the brokers.
    num.partitions=1

    # The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.
    # This value is recommended to be increased for installations with data dirs located in RAID array.
    num.recovery.threads.per.data.dir=1

    ############################# Internal Topic Settings #############################
    # The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"
    # For anything other than development testing, a value greater than 1 is recommended for to ensure availability such as 3.
    offsets.topic.replication.factor=1
    transaction.state.log.replication.factor=1
    transaction.state.log.min.isr=1

    ############################# Log Flush Policy #############################

    # Messages are immediately written to the filesystem but by default we only fsync() to sync
    # the OS cache lazily. The following configurations control the flush of data to disk.
    # There are a few important trade-offs here:
    #    1. Durability: Unflushed data may be lost if you are not using replication.
    #    2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.
    #    3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.
    # The settings below allow one to configure the flush policy to flush data after a period of time or
    # every N messages (or both). This can be done globally and overridden on a per-topic basis.

    # The number of messages to accept before forcing a flush of data to disk
    #log.flush.interval.messages=10000

    # The maximum amount of time a message can sit in a log before we force a flush
    #log.flush.interval.ms=1000

    ############################# Log Retention Policy #############################

    # The following configurations control the disposal of log segments. The policy can
    # be set to delete segments after a period of time, or after a given size has accumulated.
    # A segment will be deleted whenever *either* of these criteria are met. Deletion always happens
    # from the end of the log.

    # The minimum age of a log file to be eligible for deletion due to age
    log.retention.hours=168

    # A size-based retention policy for logs. Segments are pruned from the log unless the remaining
    # segments drop below log.retention.bytes. Functions independently of log.retention.hours.
    #log.retention.bytes=1073741824

    # The maximum size of a log segment file. When this size is reached a new log segment will be created.
    log.segment.bytes=1073741824

    # The interval at which log segments are checked to see if they can be deleted according
    # to the retention policies
    log.retention.check.interval.ms=300000

    ############################# Zookeeper #############################

    # Zookeeper connection string (see zookeeper docs for details).
    # This is a comma separated host:port pairs, each corresponding to a zk
    # server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".
    # You can also append an optional chroot string to the urls to specify the
    # root directory for all kafka znodes.
    zookeeper.connect=localhost:2181

    # Timeout in ms for connecting to zookeeper
    zookeeper.connection.timeout.ms=6000


    ############################# Group Coordinator Settings #############################

    # The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.
    # The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.
    # The default value for this is 3 seconds.
    # We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.
    # However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.
    group.initial.rebalance.delay.ms=0